AI Will Not Replace Leadership - But It Will Redesign How Leaders Think

Perspective of a Lead Senior UX Designer & Researcher - Finance, Banking, Telecom, Energy, Nuclear Energy

Chemss Salem

There’s a conversation happening in boardrooms right now that’s pointed in the wrong direction.

Most executives agree that AI will change how organizations operate. The mistake isn’t the conclusion, it’s the question they’re starting from: “Which roles will AI replace?” Because senior roles are complex, relational, and hard to turn into a neat process, many leaders end up deciding: “Probably not mine.”

They’re likely right about that. But they’re missing the real shift.

AI isn’t trying to sit in the executive chair. It’s going after the cognitive scaffolding that chair has always relied on: the information advantage, the monopoly on analysis, the authority that comes from being the person who “gets” the system better than anyone else. That foundation is what’s disappearing. Quietly, quickly, and in organizations that have already invested heavily in AI, it’s largely gone.

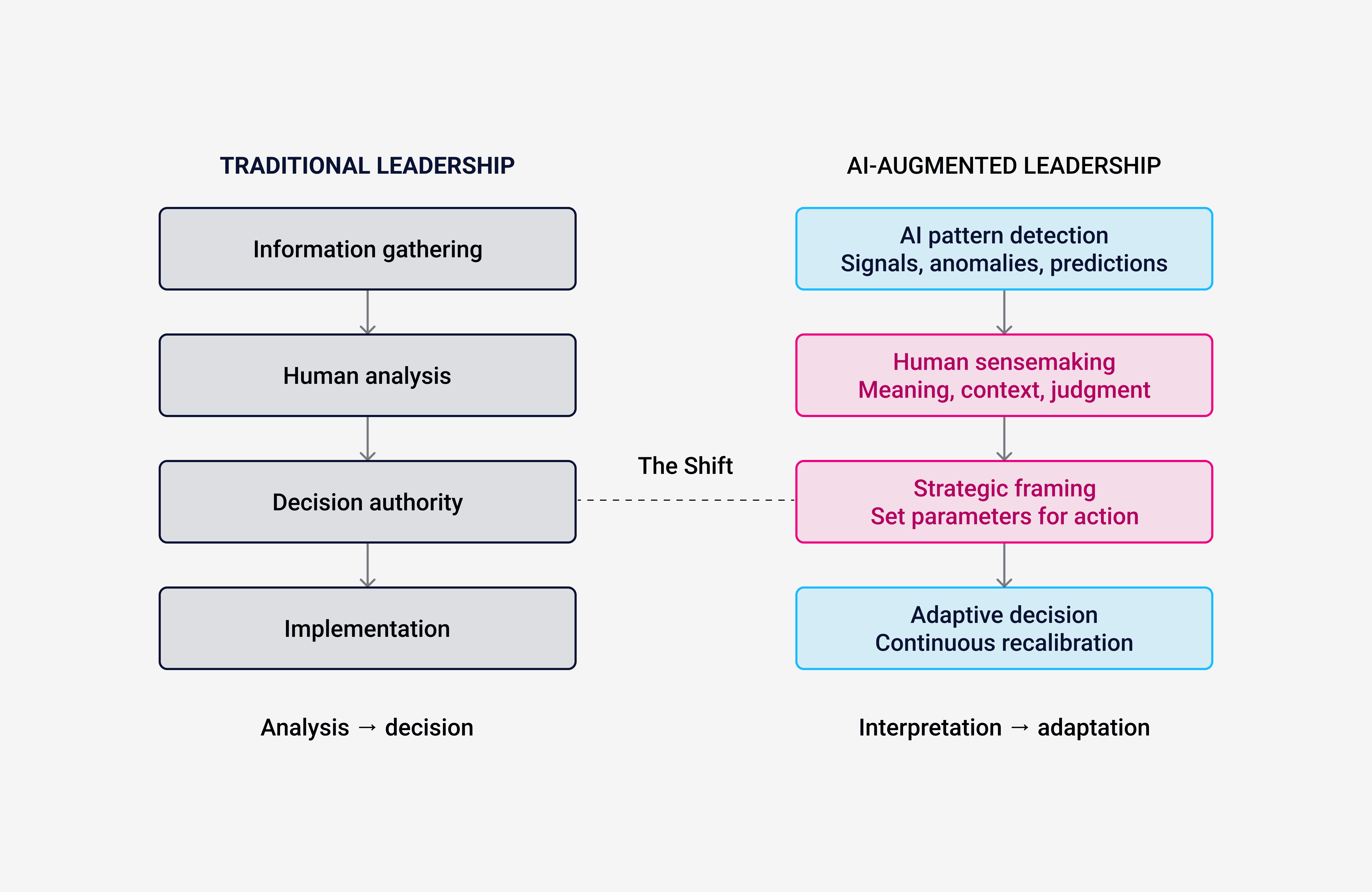

Working across banking infrastructures, telecom platforms, and nuclear energy governance, I don’t see a story about leaders being replaced. I see a story about how thinking work is being redistributed. The work of leadership hasn’t vanished. It’s shifting away from analysis and toward interpretation. Away from knowing what’s happening and toward deciding what it means.

That shift doesn’t happen automatically. It depends on leaders who understand, in a fairly hands-on way, what AI can and can’t do. In my experience, most senior people in regulated environments don’t yet have that understanding. They’ve used the tools. That’s not the same thing.

The Pillar That's Gone

Historically, leadership authority in complex institutions rested on three pillars:

Experience: having lived through cycles, crises, and recoveries that junior staff haven’t seen.

Hierarchical authority: the formal power to make binding decisions.

Information advantage: being the person with privileged access to numbers, forecasts, and internal data.

AI hasn’t really touched the first two. If anything, experience is more valuable in an AI-augmented world, because it’s what lets you see when a model’s output is technically correct but wrong for the institution. Hierarchical authority is still non-negotiable, especially in regulated sectors where accountability can’t be handed over to a model.

Information advantage, though, is basically gone.

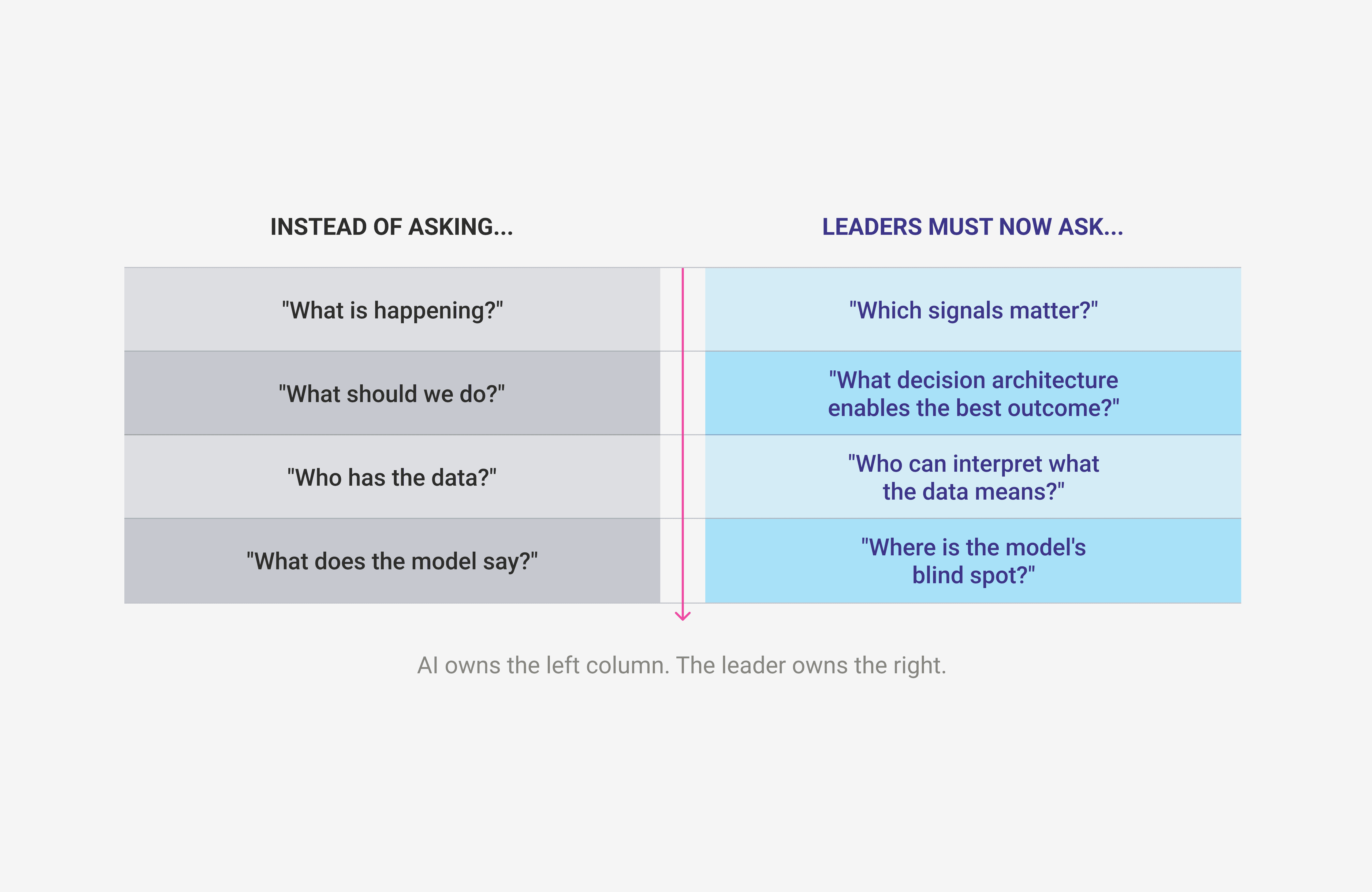

When a junior analyst on your risk team sees the same predictive outputs you do (when forecasts, anomaly detection, and scenario models sit on a shared platform), your authority can’t rest on simply “knowing more.” In a narrow data-access sense, everyone now knows more. The differentiation has moved. It now sits in what you do with the information, not the fact that you can see it.

That’s not a small tweak to how leadership works. It’s a structural change. Senior professionals whose authority has largely come from information advantage (and there are more of them than organizations like to admit) are facing a real identity crisis, whether they call it that or not.

The ones who are handling it well aren’t just the most fluent with AI tools. They’re the ones who’ve understood that their job itself has changed. They’re doing something meaningfully different: sensemaking instead of pure analysis, framing instead of computing, designing the decision environment instead of just operating inside it.

What Decision Architecture Actually Means

This phrase gets used a lot, so it’s worth being concrete.

Decision architecture isn’t just a fancy name for “good decision making.” It’s about the conditions under which decisions happen:

Which information is surfaced and which is filtered out

Which time horizons are visible and which are invisible

Which stakeholders have a voice early enough to matter and whose input always arrives too late

In a pre-AI world, decision architecture was mostly implicit. It sat inside org charts, meeting schedules, and reporting lines. It evolved slowly, because the information environment itself evolved slowly.

AI changes that by speeding up the information environment dramatically. In financial risk management, key signals for a given decision now update almost in real time. In energy grid management, data that once appeared in weekly reports now streams in continuously. In telecom, performance anomalies that used to show up in a monthly review are visible as they happen.

Most large institutions are still running a decision architecture that was built for a slower world. It assumes information arrives at a pace a hierarchical review process can keep up with. In the sectors I work in, that world doesn’t really exist anymore.

What I see over and over again are organizations that upgraded their data infrastructure but left their decision infrastructure untouched. They’ve bought and built AI tools that generate better signals, faster. They haven’t redesigned the path from signal to decision. The outcome isn’t better decisions. It’s faster confusion.

Fixing this isn’t mainly a tech problem. It’s a leadership design problem. It needs leaders who see their role as architecting the way judgment is applied, not being the primary source of judgment for every important call.

The shift from “analyzing” to “sensemaking,” from “deciding in the room” to “designing the room,” is not a matter of semantics. It marks a real relocation of cognitive load. AI tackles the first activity in each pair. The leader owns the second.

The Failure Modes I Keep Seeing

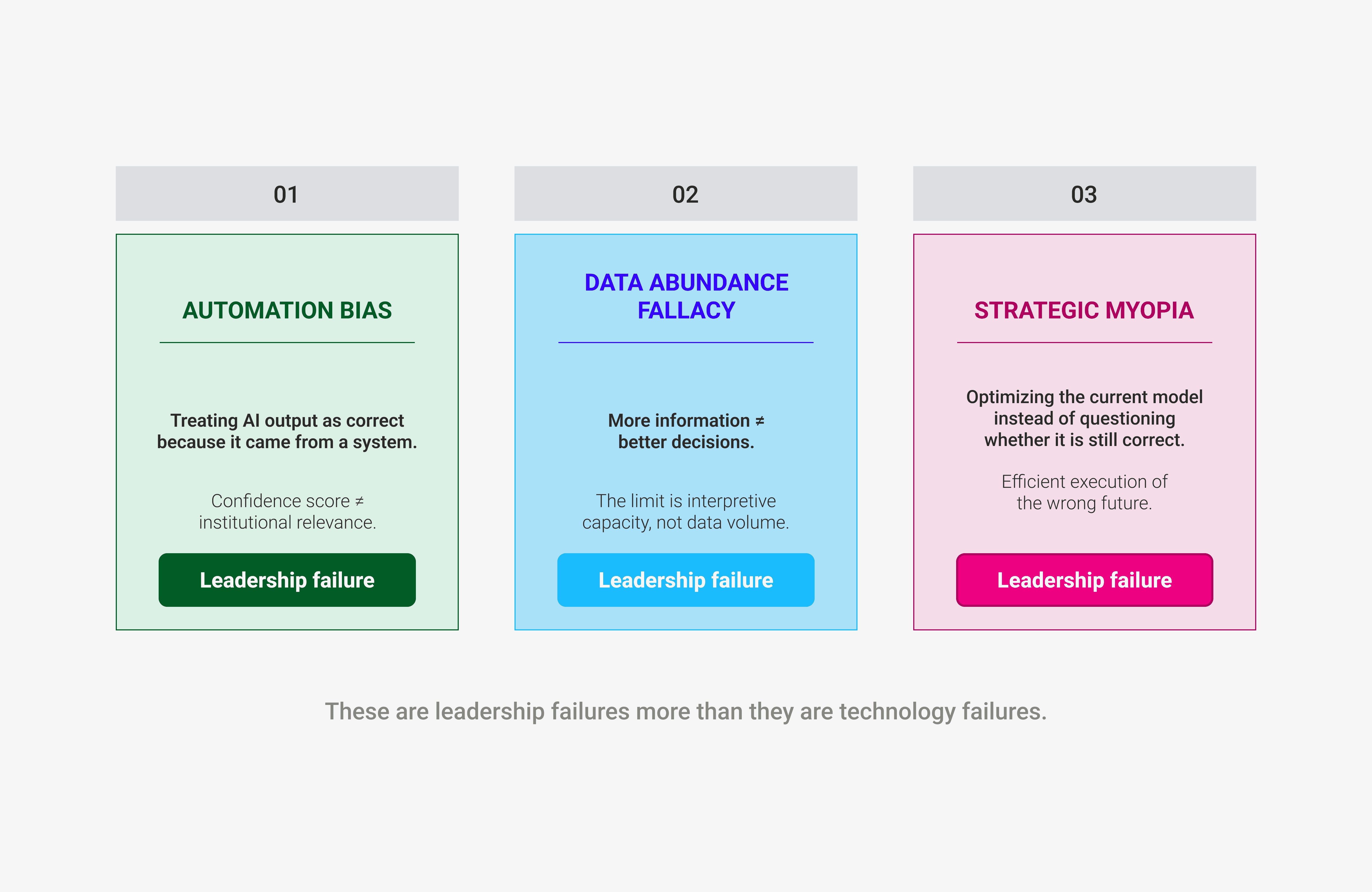

Three patterns show up so consistently across sectors that they’re worth naming.

Automation bias

This is the tendency to treat an AI output as correct just because it came from a system instead of a person. It’s especially risky in regulated environments, where a model can be technically accurate on its training data but out of date relative to the current regulatory landscape. I’ve seen risk recommendations move up the hierarchy with barely any challenge because the model showed a high confidence score. That score tells you how internally consistent the model feels its answer is, not how relevant it is for your institution. Those are different things.

The data abundance fallacy

This is the belief that more information automatically leads to better decisions. It doesn’t. In executive work on complex systems, the bottleneck is rarely data volume. It’s interpretive capacity. When organizations flood senior leaders with dashboards, they’re not empowering them; they’re overwhelming them. The AI layer that matters most is often not the one generating more signals, but the one filtering them.

Strategic myopia disguised as efficiency

AI makes it very easy to optimize local performance within the current assumptions. It’s much less useful at asking whether those assumptions are still right. Organizations that lean on AI mainly to do the current thing better, rather than questioning what the “current thing” should be, build very efficient versions of the wrong future.

These are leadership failures more than technology failures. The tools are doing what they were designed to do. The breakdown is happening in the judgment layer above them.

The UX of Executive Decision-Making

There’s another aspect of this shift that rarely comes up outside design circles, and it deserves attention.

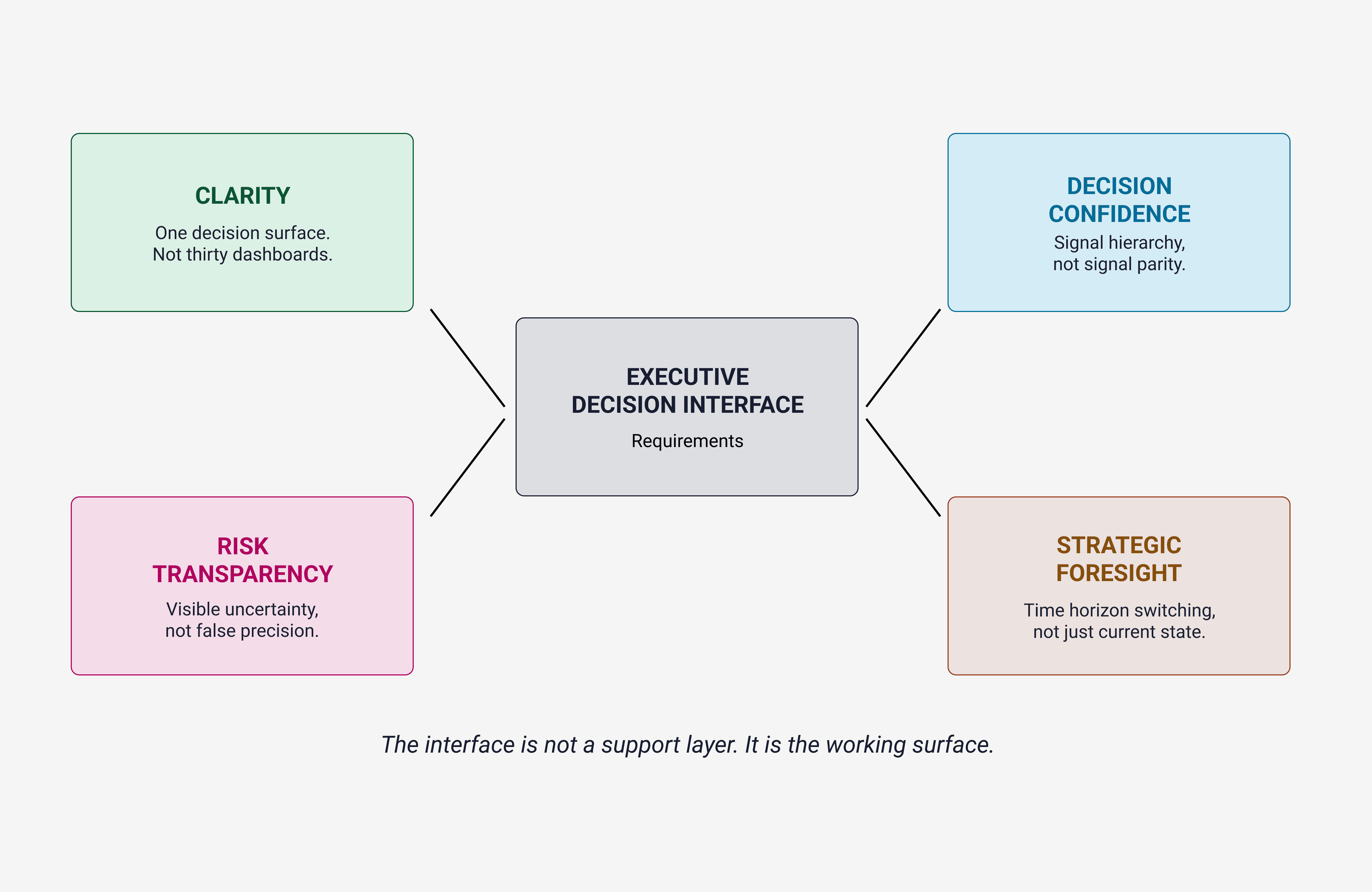

AI has changed what it feels like to be a leader. The interface through which executives operate (the dashboards, scenario tools, and AI-generated briefs) has become the main place where strategic thinking happens. It’s not a support layer anymore. It’s the working surface.

In most organizations, that working surface wasn’t designed with much thought for how people actually think and decide under pressure.

A dashboard with twenty signals shown with equal visual weight isn’t neutral. It quietly tells you that all twenty are equally important, and that’s wrong as often as it’s right. The way executive interfaces are designed bakes in assumptions about what matters, which time horizons count, and how much uncertainty is acceptable to show. Those choices are often made by default, by whoever configured the BI tool, rather than through deliberate design.

Working in nuclear energy governance has made this feel very concrete for me in a way that banking never quite did. The cognitive load of managing a safety-critical system in real time is different in kind, not just degree, from financial risk management. Decisions about which signals to surface, and how to show uncertainty so that it neither freezes nor falsely reassures a decision maker, are not cosmetic. They are governance questions.

The organizations that handle this well have realised that. They invest in designing executive decision environments with the same seriousness they apply to building their AI models. Most organizations don’t. That gap is real, and it’s growing.

The direction of travel, when it’s done well, looks like this:

Clarity: one main decision surface, not thirty dashboards.

Decision confidence: a clear signal hierarchy, not every metric shouting at the same volume.

Risk transparency: visible uncertainty, not fake precision.

Strategic foresight: the ability to move between time horizons, not just stare at the current state.

Speed, feedback, and everyday judgment

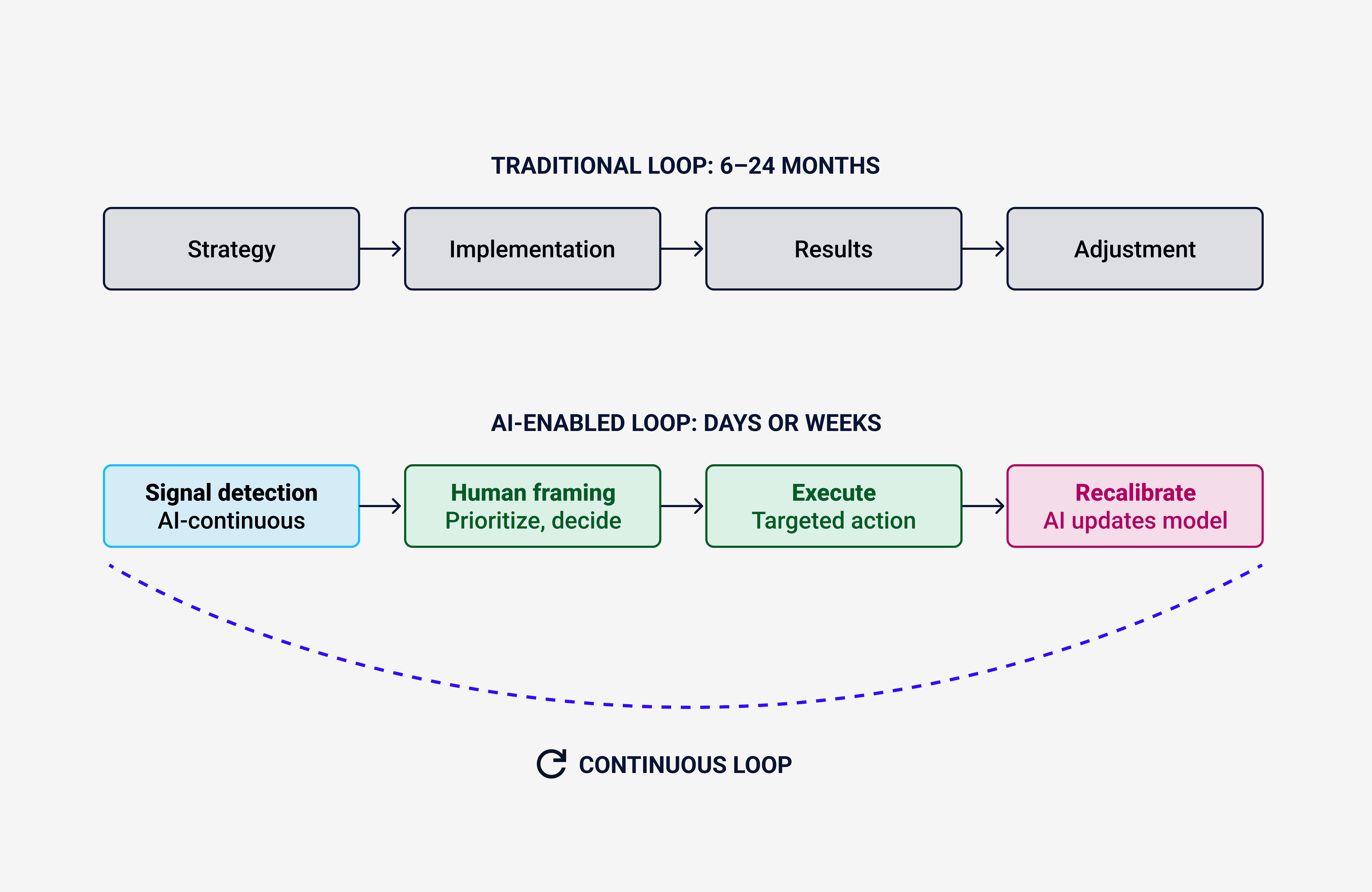

The last shift I want to touch on is the feedback loop, because it changes what “good leadership” actually means.

Traditional strategy cycles moved on a six-to-twenty-four-month rhythm. Leaders set a direction, executed, then waited long enough to see results before adjusting. That delay, clumsy as it was, offered a kind of built-in protection from bad judgment: there was time to observe, reflect, and course-correct before mistakes became irreversible.

AI compresses that loop.

Feedback now arrives in days or weeks. Leadership quality is measured less by the occasional brilliant decision and more by the average quality of judgment under continuous pressure.

A fast feedback loop is an advantage for organizations with solid judgment processes. For ones with weak processes, it’s an accelerant. They don’t just go wrong; they get to the wrong place faster.

This creates an environment where a leader’s everyday judgment matters more than ever. Not the one impressive decision you talk about in a case study, but the stream of decisions you make under time pressure, with incomplete information, while AI surfaces signals faster than the institution was built to digest.

The leaders I see doing well in this world tend to share a few traits. They’re genuinely comfortable with uncertainty: not just performing confidence, but able to hold a view firmly and still revise it when the evidence shifts. They have a calibrated skepticism toward AI outputs: neither automatic trust nor automatic distrust. And they see their most important job as designing the conditions for good answers to emerge, not being the person who always has the answer.

The New Leadership Mindset

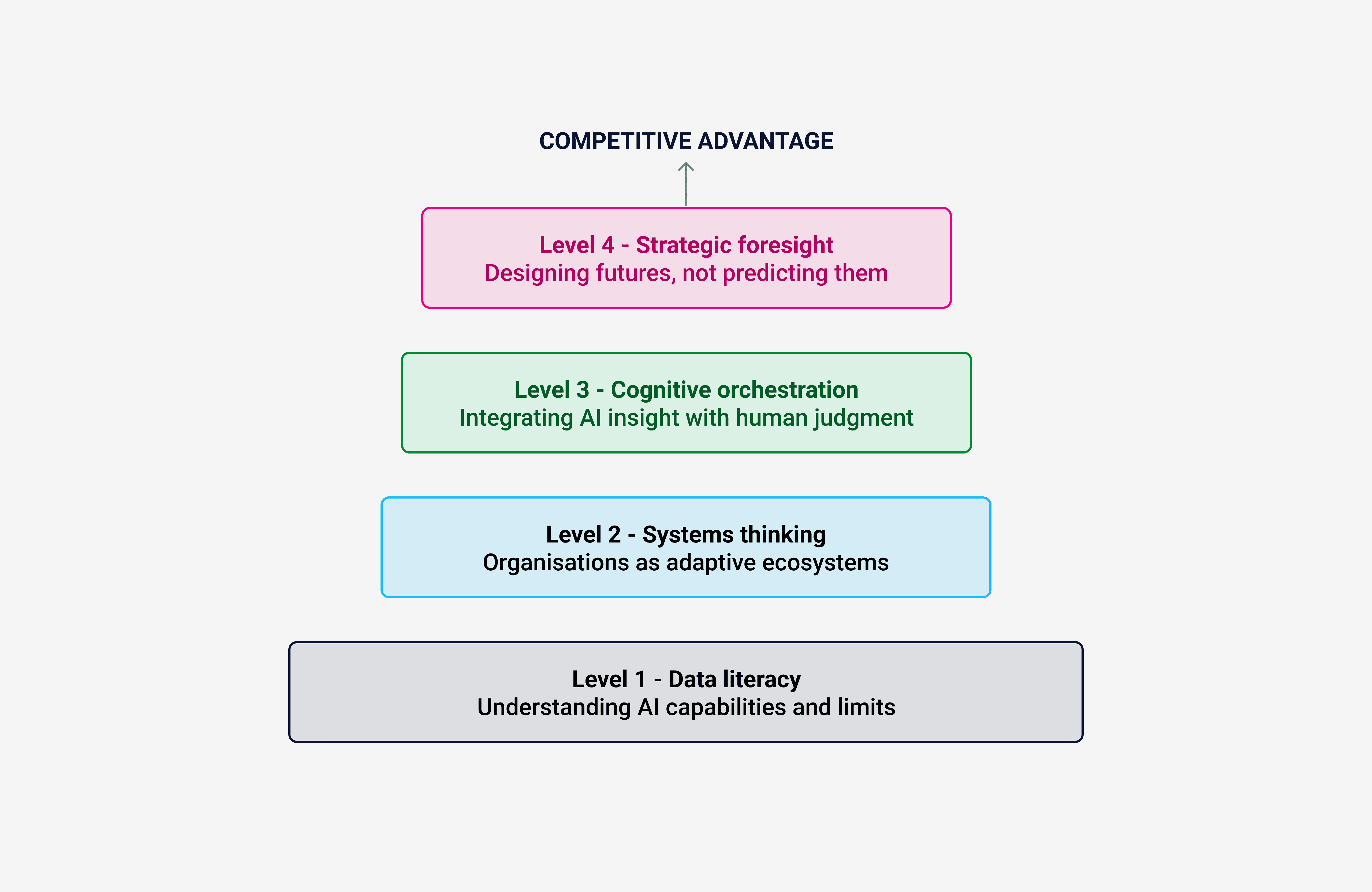

I don’t want to pretend there’s a neat framework that magically makes this easy. Reality is messier than that. But there is a helpful way to think about the shift in competence that’s required.

Think of the “stack” of leadership capabilities not as a ladder you climb once, but as layers you need to operate across at the same time. A leader who’s strong at strategic foresight but weak on data literacy will misread AI outputs. A leader who understands the data but can’t orchestrate cognitive work across people and systems will stay reactive.

What matters is the synthesis, not any single layer.

Leadership isn’t about being the smartest person in the room anymore. It’s about building the smartest system around you.

Leaders in AI-augmented organizations will work from a different mental model. Not a slightly improved version of the old one, but a different one. Decision makers become sensemakers. Controllers become system architects. Authority figures become orchestrators of human and machine intelligence working side by side.

It’s worth being honest about the scale of this shift. Pieces like this often end on a cautiously optimistic note that the evidence doesn’t really justify.

The cognitive shift I’ve described is real and already underway. In the sectors I work in (finance, energy, telecom), organizations that have seriously invested in AI are already operating in this environment. Their decision cycles are faster. Their information environments are richer. And the gap between leaders who’ve adapted their mental model and those who haven’t is already visible in outcomes.

The leaders who thrive aren’t necessarily the most technical. They’re the ones who’ve accepted that their job has changed at a structural level. They act as sensemakers, system architects, and cognitive orchestrators of human and machine intelligence in parallel.

The leaders who struggle aren’t failing because AI is too powerful or too opaque. They’re failing because they’re trying to do a different job with the same mental model they built over the past twenty years. The tools changed. Their assumptions about what leadership is for did not.

AI will not replace leadership.

But it will quietly make certain models of leadership obsolete. Not with a dramatic break, but steadily, without much fanfare. The executives who are paying attention, in the specific, unglamorous sense of redesigning how they think and not just which tools they use, are building organizations that will still make sense five years from now.

The rest will keep optimizing efficiently toward a future that has already disappeared.

About the author

Chemsseddine SALEM is a Lead UX Researcher & Designer specialising in Enterprise SaaS, UX Governance, Finance, and Energy sectors. He is the founder of Chemss Labs and works with organisations navigating large-scale digital transformation in regulated environments.
