The Foundations of UX Trust: Designing Confidence in Regulated Industries

Designing trust in UX means crafting clarity, transparency, and accountability, especially in finance, energy, and healthcare.

Chemss Salem

Trust is the invisible architecture of every digital experience in regulated industries. Not usability. Not visual appeal. Trust.

When a user logs into a mobile banking application, reviews an energy bill, or shares medical records through a government portal, they are not primarily evaluating design quality. They are asking a more fundamental question: do I believe this system will do what it says? That question is answered unconsciously and continuously by every design decision in the product. Typography. Microcopy. Loading states. Error messages. Authentication flows. Each one is either building credibility or eroding it.

The 2024 PwC Digital Trust report found that over 85% of users in regulated markets said trust in digital services directly influenced their willingness to adopt or recommend a platform. Only 42% said speed or visual appeal had the same effect. In financial services, energy, healthcare, and public services, trust is not a secondary consideration; it is the primary metric by which users evaluate whether a system deserves their continued engagement.

This article examines the structural, psychological, and operational foundations of UX trust in regulated environments: what produces it, what destroys it, and what the evidence from real programmes shows actually works.

1. Why Trust Is the Primary Metric in Regulated Contexts

Most product teams measure UX success through task completion, satisfaction scores, or error rates. In regulated industries, those metrics are downstream of a more fundamental condition: whether the user believes the system is safe, honest, and competent.

A user will tolerate a longer task flow. A user will not tolerate a moment of doubt about whether their financial data is secure, their medical records are private, or their energy contract is being handled accurately. The tolerance threshold for uncertainty in these contexts is structurally lower than in consumer products because the consequences of getting it wrong are structurally higher.

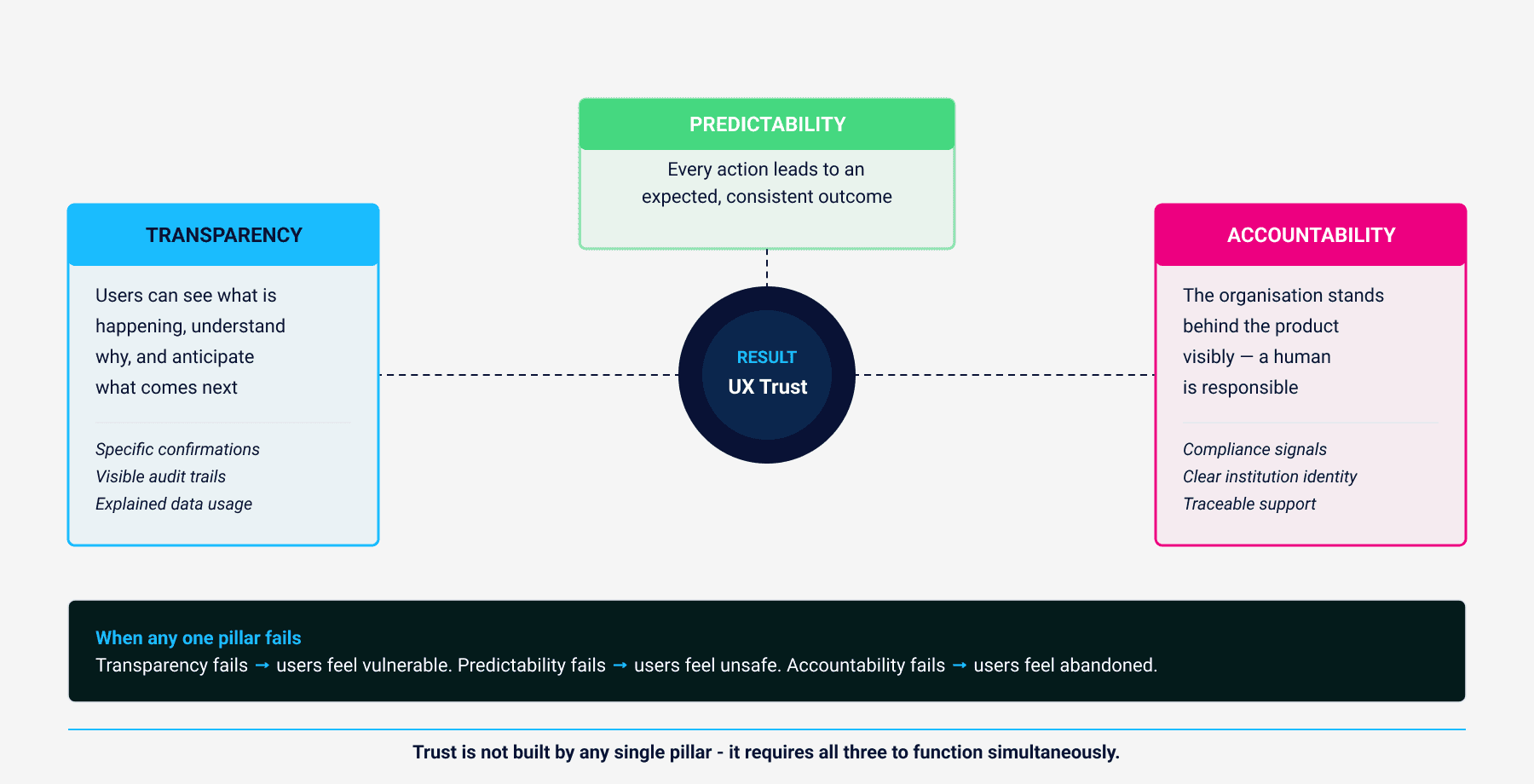

Designing for trust means designing for what cognitive psychologists call reliability perception: the combination of transparency, predictability, and accountability that allows users to form stable, positive expectations about a system’s behaviour over time.

2. The Three Pillars of UX Trust

Trustworthy UX rests on three interdependent conditions, each of which can be designed for deliberately.

Transparency means users can see what is happening, understand why, and anticipate what comes next. In financial and energy applications, this is not about disclosure documentation; it is about interface-level clarity. A transaction confirmation that reads “Your wire transfer of €1,200 to HSBC UK has been securely processed. Expected arrival: 24 hours” does more for trust than any privacy policy page. The specificity signals competence. The structure signals predictability. The detail eliminates the gap between user expectation and system behaviour where anxiety lives.

Predictability means every action leads to an expected and consistent outcome. Buttons behave identically across screens. Navigation patterns do not surprise. Error messages follow established norms. This becomes critical at failure points (timeouts, authentication errors, declined transactions), where a user’s trust model is most fragile. A transparent, empathetic error message preserves trust at the moment it is most at risk. A vague or technical one destroys it permanently.

Accountability means the organisation behind the interface stands behind its product visibly. Compliance signals (ISO certification marks, GDPR-compliant handling statements, visible support channels) communicate that a human institution is responsible, not just an algorithm. The goal is to make users feel that if something goes wrong, someone specific is accountable and reachable. That feeling cannot be faked with design alone, but it can be structurally reinforced through design.
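One way to make failure states predictable is to route every error through a single message catalogue, so each message says what happened and what the user can do next. The sketch below is illustrative only: the failure codes, the wording, and the names `UserMessage`, `MESSAGES`, and `message_for` are assumptions, not taken from any product discussed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserMessage:
    """A failure message with a fixed two-part structure."""
    what_happened: str
    next_step: str

    def render(self) -> str:
        return f"{self.what_happened} {self.next_step}"


# Hypothetical failure codes and wording, for illustration only.
MESSAGES = {
    "session_timeout": UserMessage(
        "For your security, we signed you out after a period of inactivity.",
        "Sign in again to continue.",
    ),
    "payment_declined": UserMessage(
        "Your bank declined this payment, so no money has left your account.",
        "Check your balance or try another card.",
    ),
}

# Unknown codes still get a structured message instead of a raw error.
FALLBACK = UserMessage(
    "Something went wrong on our side and your request was not completed.",
    "Please try again, or contact support if the problem continues.",
)


def message_for(code: str) -> str:
    """Return the user-facing text for a failure code."""
    return MESSAGES.get(code, FALLBACK).render()


print(message_for("payment_declined"))
```

Keeping the structure in one place means an unanticipated failure still produces a message in the same shape and tone as every other one, rather than leaking a technical error string.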

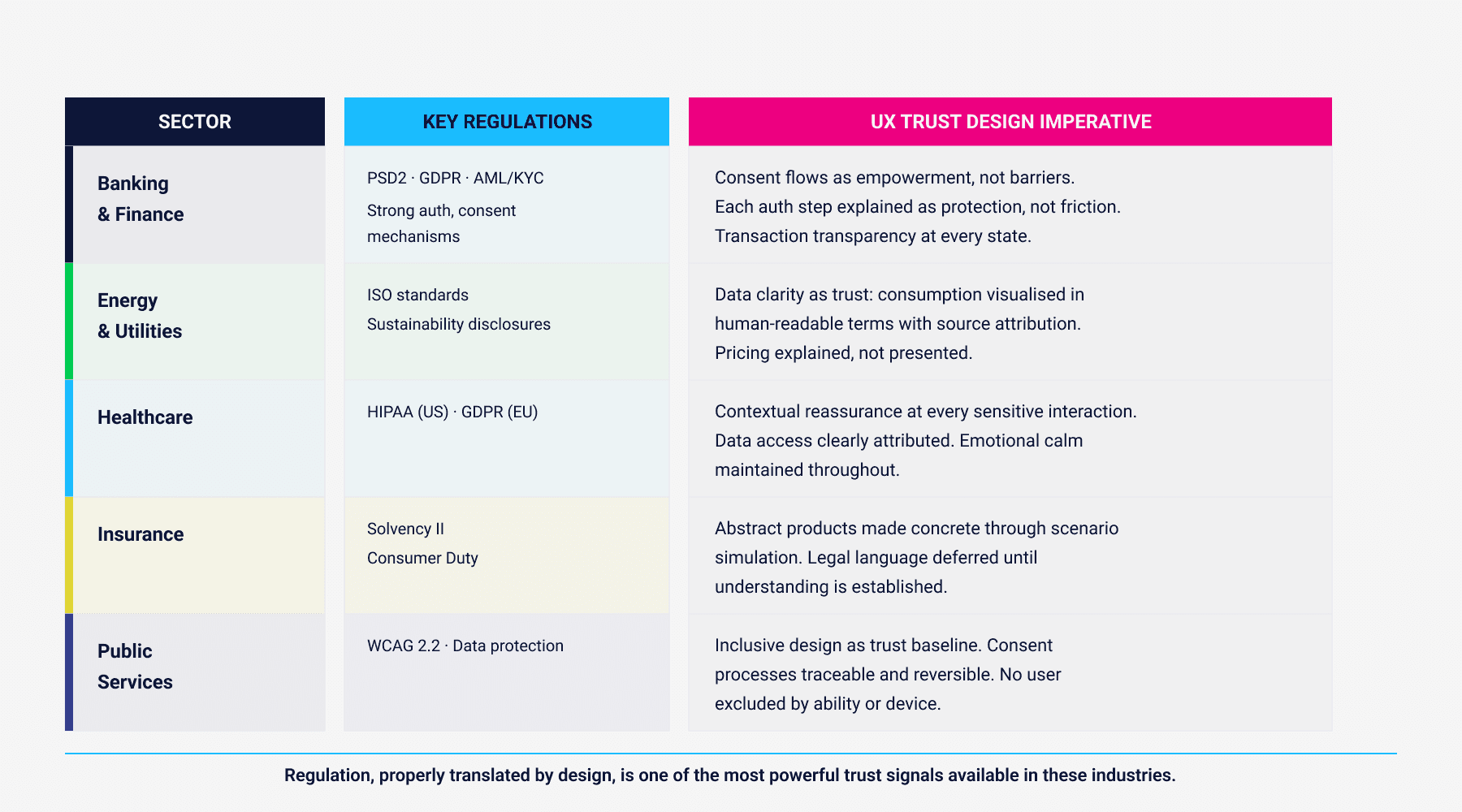

3. How Regulation Shapes UX Trust - for Better and Worse

Every regulated sector imposes legal frameworks that directly shape user experience, whether or not the design team accounts for them explicitly.

PSD2 in banking requires explicit consent for third-party data access. GDPR across all sectors creates data transparency obligations. HIPAA in US healthcare and equivalent EU frameworks govern how patient data is accessed and communicated. The European Accessibility Act and WCAG 2.2 define minimum standards for inclusive design. Each regulation creates a structural condition that UX must translate into a coherent user experience, not just a compliant one.

The failure mode is treating compliance as a gate. When legal requirements are implemented as friction-generating form fields, consent walls, and mandatory disclosures dropped into user flows without design consideration, they become trust obstacles rather than trust builders. A KYC verification step presented abruptly signals bureaucratic indifference. The same step presented as “For your security, we verify your identity using encrypted, regulated systems. This ensures your funds are always protected” becomes a trust-reinforcing moment. The regulatory requirement is identical. The user experience of that requirement is entirely a design decision.

Regulation, properly translated by design, is one of the most powerful trust signals available in these industries. Most organisations leave it unused.

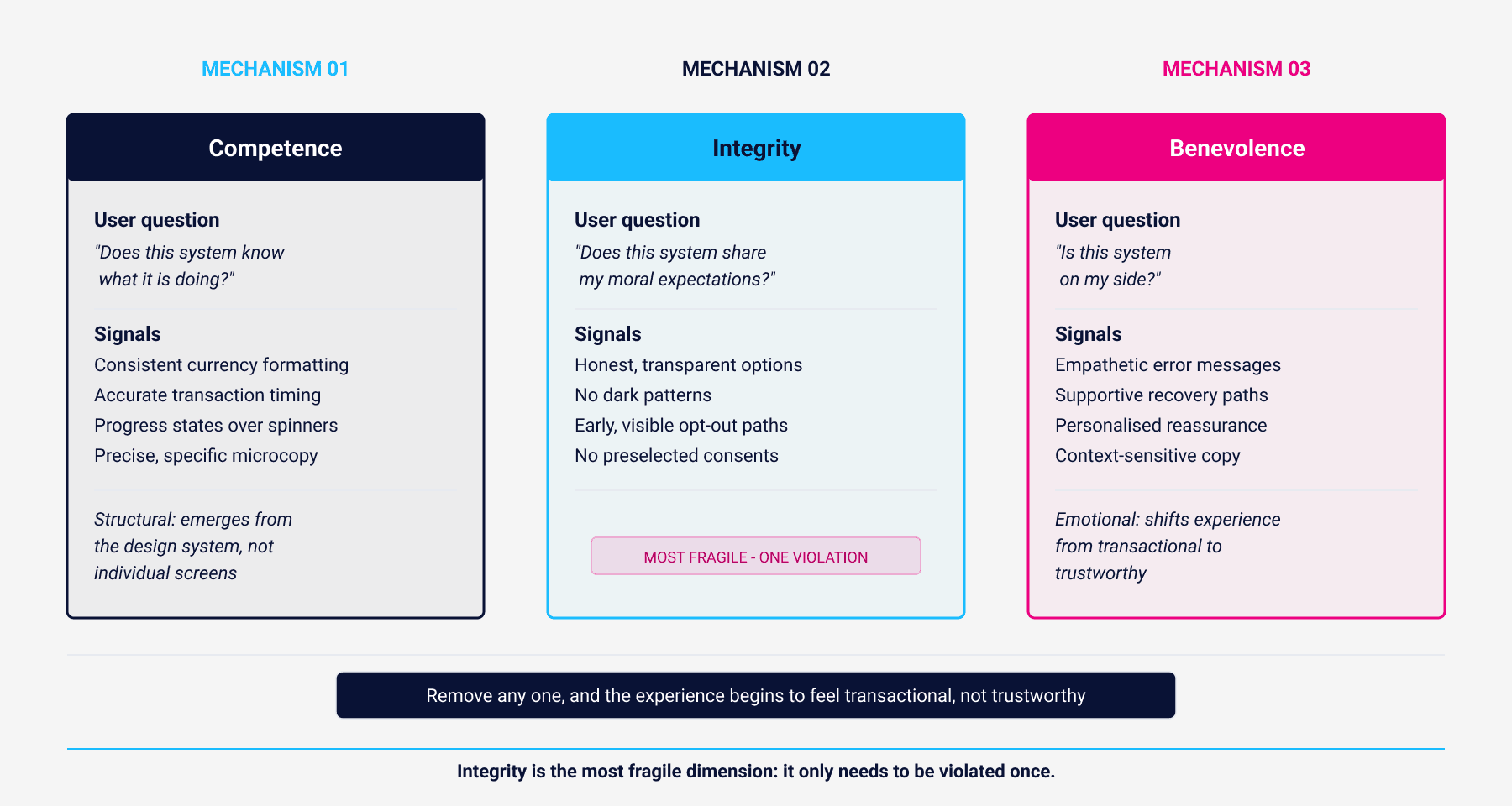

4. The Psychology of Digital Trust

Cognitive psychology identifies three mechanisms that determine whether users extend trust to a system: competence, integrity, and benevolence. These are not sequential; they operate simultaneously, and the absence of any one collapses the others.

Competence is the user’s inference that the system knows what it is doing. It is communicated through precision, speed, and clarity: consistent currency formatting, accurate transaction timing, loading states that convey progress rather than uncertainty. Competence signals are structural: they emerge from the design system, not from individual screens.

Integrity is the user’s judgement that the system aligns with their moral expectations. Honest, transparent options build it. Manipulative patterns (preselected consents, deliberately obscured opt-out paths, urgency framing that manufactures pressure) destroy it faster than any positive signal can rebuild it. Integrity is the most fragile of the three dimensions: it only needs to be violated once.

Benevolence is the emotional dimension: the user’s sense that the system is on their side. It is built through empathetic feedback, supportive error states, and personalised reassurance that treats the user as a person with a specific situation rather than an anonymous transaction. “We know this is sensitive. Your data is encrypted and visible only to you” does more for benevolence than ten security certification badges.

When competence, integrity, and benevolence operate together, the user experience stops feeling transactional and starts feeling trustworthy. That is not a soft outcome. It is the precondition for long-term engagement in industries where users have the option to disengage.

5. Visual Language and Trust Signals

Visual perception accounts for approximately 80% of initial trust signals in digital environments. These signals operate before the user has read a single word of content.

The consistent finding across financial, healthcare, and energy interfaces is that trust is not communicated by elaborate visual design; it is communicated by restraint, consistency, and deliberate use of conventional signals. Blues and neutrals in banking interfaces are not aesthetic preferences; they are calibrated to evoke the same psychological associations as physical institutional spaces. High contrast and clear typographic hierarchy communicate technical robustness. Whitespace communicates that the system is not hiding complexity.

Iconography is particularly consequential. In A/B tests conducted across fintech products, interfaces that included a lock icon adjacent to payment form fields showed trust perception scores 23% higher than identical forms without the icon. The icon adds no functional information: the form is equally secure in both versions. What it adds is a legible visual signal that the system is aware of security as a concern, which in turn signals that the organisation behind it is aware of that concern.

The principle is consistent: trust is communicated by making the invisible visible. Security, accuracy, and regulatory compliance are structural properties that users cannot directly observe. Visual design is the mechanism by which they become perceptible.

6. Content Design as Trust Infrastructure

Language in regulated products is not brand voice work. It is infrastructure. Every piece of microcopy, every error message, every confirmation state either advances or undermines the user’s belief that the system is competent, honest, and on their side.

The failure mode is treating regulatory precision and human clarity as competing objectives. They are not. “Your application is under processing” satisfies neither requirement: it is neither legally specific nor humanly reassuring. “We are reviewing your application. You will receive an update within 24 hours” is both: it is specific enough to be accountable and direct enough to be understood.

Tone consistency matters more than most teams acknowledge. An interface that is formal in its transactional flows and casual in its marketing content does not read as versatile; it reads as inconsistent. Inconsistency in a regulated context signals that different parts of the organisation have different standards, and users correctly interpret that as a governance problem. The mental model for appropriate tone in these contexts is a knowledgeable, calm professional: someone who speaks plainly about complex things without either oversimplifying or performing expertise.

7. Microinteractions as Trust Signals

The micro-details of an interface (loading states, confirmation animations, progress indicators, haptic feedback) are not decorative. They are the moment-to-moment signalling system through which users continuously update their trust model.

“We are verifying your identity (Step 2 of 3)” does more work than it appears to. It signals competence (the system knows where it is in a process), transparency (the user can see the same information), and predictability (there are exactly three steps, so the end is visible). A vague spinner communicates none of those things.
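As a minimal sketch of how such a label might be produced consistently, assuming the interface knows the current step and the total in advance, a single helper can build the text and reject impossible states. The function name `progress_label` is hypothetical.

```python
def progress_label(action: str, step: int, total: int) -> str:
    """Build a status line that shows position in a fixed-length process."""
    # Refuse states such as "Step 4 of 3", which would undermine the
    # predictability the label is meant to signal.
    if total < 1 or not 1 <= step <= total:
        raise ValueError("step must be between 1 and total")
    return f"{action} (Step {step} of {total})"


print(progress_label("We are verifying your identity", 2, 3))
```

Generating the label in one place keeps the step count consistent across screens, which is the property the example message depends on.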

In financial applications, the confirmation animation at payment completion (the checkmark, the visual state change) functions as an emotional anchor. It provides closure before the user reads the confirmation text. In a context where users are psychologically primed to watch for failure, that clear, unambiguous success signal is a trust deposit.

The discipline required is intentionality. Microinteractions that are not deliberately designed tend to default to technically functional but emotionally neutral, which in regulated contexts reads as cold, impersonal, and therefore slightly untrustworthy.

8. Measuring Trust

Trust is measurable. Most teams do not measure it because they are not sure how, but the methods are established.

A Trust Index derived from Likert-scale user surveys (“I feel safe performing financial actions here”) provides a quantitative baseline that can be tracked over time and correlated with product changes. Drop-off rates during verification flows are a proxy for perceived friction, which is itself a proxy for trust barriers. NPS scores correlated with confidence-related keywords in qualitative feedback identify the trust dimension of advocacy.
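The two quantitative measures above can be sketched in a few lines. This is one possible formulation, not a standard: the 0 to 100 rescaling of the Likert mean, the 5-point scale, the funnel step names, and the function names `trust_index` and `drop_off_rates` are all assumptions made for illustration.

```python
from statistics import mean


def trust_index(responses: list[int], scale_max: int = 5) -> float:
    """Mean Likert response (1..scale_max) rescaled to a 0-100 index."""
    if not responses:
        raise ValueError("at least one response is required")
    if any(r < 1 or r > scale_max for r in responses):
        raise ValueError("responses must lie between 1 and scale_max")
    return (mean(responses) - 1) / (scale_max - 1) * 100


def drop_off_rates(funnel: list[tuple[str, int]]) -> dict[str, float]:
    """Share of users who entered each step but did not reach the next one."""
    rates: dict[str, float] = {}
    for (name, entered), (_, reached_next) in zip(funnel, funnel[1:]):
        rates[name] = (entered - reached_next) / entered if entered else 0.0
    return rates


# Hypothetical survey answers to "I feel safe performing financial actions here".
print(trust_index([5, 4, 4, 3, 4]))

# Hypothetical verification funnel: users entering each step.
print(drop_off_rates([("start", 1000), ("id_upload", 800), ("done", 600)]))
```

Tracked per release, the index gives the baseline described above, and the per-step drop-off shows where in the verification flow users abandon. Neither number explains why, which is where the qualitative methods come in.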

Qualitative methods are equally important. Contextual inquiry (observing users in realistic scenarios involving financial decisions or sensitive data) surfaces trust signals and trust failures that survey instruments miss. Trust-mapping workshops, where teams work through each interaction phase identifying what the user believes at that moment and what evidence they have for that belief, are particularly effective for regulated products where the stakes of trust failure are high.

The critical discipline is continuous validation. Compliance requirements change. Privacy frameworks evolve. Security standards tighten. A trust architecture that was accurate six months ago may have been undermined by a regulatory update, a product change, or a public incident in the sector. Trust is not a feature to be shipped; it is a condition to be maintained.

9. Evidence from Practice: Seven Regulated Environments

The following cases are drawn from publicly documented UX transformation programmes in regulated industries. Each demonstrates a specific mechanism by which trust was designed, not assumed.

Revolut - Designing Trust Through Visual Transparency

Industry: Digital Banking & Finance

Region: Global (UK-based, EU-regulated under PSD2 and FCA)

Revolut addressed the trust problem inherent in digital-only banking without physical branches. Their approach was transactional transparency: real-time feedback at every step of a transfer, consistent navigation across platforms, and progressive trust cues that show each verification state explicitly. The result, according to the 2024 YouGov Brand Trust Index, was a top-3 ranking among UK fintechs achieved without institutional legacy.

Monzo - Emotional Honesty and Tone of Voice

Industry: Digital Banking

Region: UK (FCA-regulated)

Monzo recognised that tone carries as much trust signal as visual design. They built a content design framework centred on honesty, clarity, and warmth, replacing technically accurate but emotionally alienating error messages with human-scaled explanations. “Looks like there wasn’t enough in your account to make this payment. You can try again once you’ve topped up” accomplishes what a generic decline message cannot: it treats the user as a capable adult, attributes the problem accurately, and provides a clear path forward. Their NPS exceeded 70 in 2023.

EDF Energy - Trust Through Data Clarity

Industry: Energy & Utilities

Region: France / UK / EU-regulated

EDF Energy faced credibility problems around billing transparency and data accuracy. Their redesigned digital dashboard introduced consumption storytelling (graphs showing usage over time with contextual comparisons), plain-language billing summaries, and source attribution linking every metric to its underlying data. The result was a 37% increase in digital engagement and a 21% reduction in billing complaints within twelve months.

NHS Digital - Trust in Sensitive Information Systems

Industry: Healthcare

Region: United Kingdom (NHS / GDPR / HIPAA principles)

NHS Digital manages patient records in a context where trust failure carries national consequences. Their approach combined explicit contextual reassurance, accessible multi-factor authentication framed as protection rather than friction, and institutional design consistency across all digital portals. Trust-mapping workshops with patients identified the specific interaction points where anxiety was highest, and those points were redesigned with stepwise reassurance. Satisfaction scores showed a 42% increase in perceived security.

ING Bank - Trust by Design Compliance

Industry: Banking / Financial Services

Region: Netherlands / EU-wide under PSD2 and GDPR

ING Bank used the PSD2 compliance requirement as a trust-building opportunity. Progressive disclosure framing, timed permissions with proactive reminders, and educational overlays explaining the regulatory framework turned a compliance obligation into a demonstration of institutional transparency. Consent completion increased by 33%.

AXA Insurance - Visualising Reliability in Abstract Products

Industry: Insurance & Risk Management

Region: Global (EU and US markets)

AXA Insurance addressed the fundamental trust problem of intangible products. Their approach combined interactive policy simulators showing what is covered in realistic scenarios, claim-handling animations demonstrating process transparency, and empathic onboarding that deferred legal language until after the user understood the product. Together, these increased online policy conversions by 25% and reduced claim abandonment by 17%.

Swissgrid - Trust Through Operational Transparency

Industry: Energy Infrastructure (National Grid)

Region: Switzerland / EU-regulated

Swissgrid, which manages national power infrastructure, required macro-level trust from business and government clients. Their public-facing dashboards show real-time grid frequency and load data, making operational performance directly observable. The open data API allows independent verification. Trust was built not through reassurance copy, but through radical operational transparency.

In short:

Trustworthy UX = Cognitive clarity + Emotional reassurance + Institutional accountability.

10. Trust Under Emerging Technological Pressure

The conditions under which trust must be designed are changing faster than most regulated organisations are adapting.

Explainable AI is the most immediate design challenge. As AI systems are embedded in credit scoring, insurance pricing, fraud detection, and healthcare diagnostics, users are increasingly affected by decisions whose logic they cannot access. The EU AI Act will codify explainability requirements that are already becoming user expectations. The design question is not whether to surface AI reasoning; it is how to surface it in ways that are meaningful to non-technical users without misrepresenting the underlying model.

Zero-trust security architectures, which require verification at every interaction boundary, create new design problems. Every verification step is a potential trust friction point. The UX discipline is to frame that friction accurately, as evidence of protection rather than evidence that the system distrusts the user, and to calibrate it to actual risk levels rather than applying it uniformly.
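Calibrating visible friction to risk can be sketched as a simple scoring rule. Everything in this example is hypothetical: the risk signals, the weights, the thresholds, and the names `Friction` and `required_friction` are placeholders that a real programme would replace with values agreed between its security, compliance, and design functions.

```python
from enum import Enum


class Friction(Enum):
    """How much verification the user actually sees."""
    SILENT = "background checks only"
    CONFIRM = "re-enter PIN or biometric"
    STEP_UP = "second factor required"


# Protection-framed copy shown alongside each level (illustrative wording).
EXPLANATION = {
    Friction.SILENT: "",
    Friction.CONFIRM: "Please confirm it is you. This keeps your account protected.",
    Friction.STEP_UP: "This payment is unusual for your account, so we are adding an extra check to protect your funds.",
}


def required_friction(amount_eur: float, new_payee: bool, new_device: bool) -> Friction:
    """Map hypothetical risk signals to a visible verification level."""
    score = 0
    if amount_eur >= 1000:      # assumed threshold
        score += 2
    elif amount_eur >= 100:     # assumed threshold
        score += 1
    if new_payee:
        score += 1
    if new_device:
        score += 2
    if score >= 3:
        return Friction.STEP_UP
    if score >= 1:
        return Friction.CONFIRM
    return Friction.SILENT


level = required_friction(amount_eur=1200, new_payee=True, new_device=False)
print(level.name, "-", EXPLANATION[level])
```

The system still verifies every request in the background. What varies is how much of that verification is surfaced to the user, and each visible step carries copy that frames it as protection.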

The EU AI Act and Digital Services Act together establish a trajectory that UX designers working in regulated environments need to track: trustworthiness by design is moving from a professional standard to a legal requirement. The organisations that have invested in the foundations described in this article are better positioned for that transition than those still treating trust as a soft outcome of good visual design.

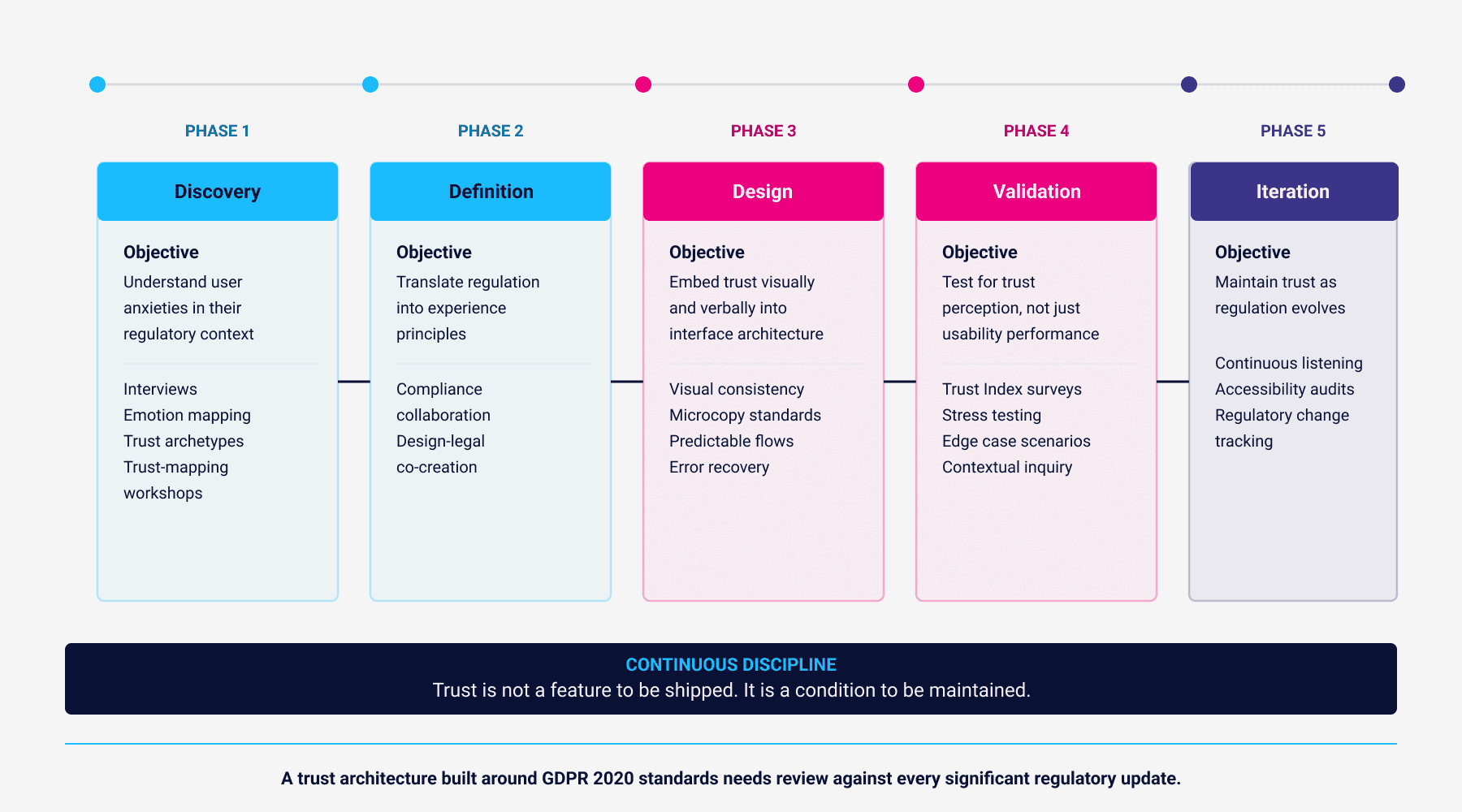

11. A Framework for Trust by Design

Building trust in regulated products is not a single-phase project. It is a continuous operating discipline that requires structured investment across the full product development cycle.

The discovery phase is about understanding user anxieties in their specific regulatory context: not generic user research, but targeted investigation into what users fear, what they are uncertain about, and where their current trust model is already fragile.

The definition phase is where design and legal functions must collaborate directly. Compliance requirements that arrive as documents at the end of a design sprint become friction. Compliance requirements that arrive as design constraints at the beginning of one become trust opportunities.

The design phase is where transparency, predictability, and accountability are embedded into interface architecture, not as afterthoughts, but as structural decisions about information hierarchy, state communication, confirmation patterns, and error recovery.

Validation in this context means testing for trust perception, not just usability performance. A task completed with residual doubt about its accuracy is not a successful interaction. Stress testing (presenting users with edge cases, failure states, and ambiguous situations) reveals trust architecture failures that standard usability testing misses.

Iteration must account for regulatory change. A trust architecture built around GDPR guidance as it stood in 2020 needs review against every significant regulatory update. The systems that maintain trust over time are the ones with continuous monitoring processes, not one-time validation.

About the Author

Chemsseddine SALEM is a Lead UX Designer and Researcher specialising in Enterprise SaaS, UX Governance, Finance, and Energy sectors. He is the founder of Chemss Labs and works with organisations navigating large-scale digital transformation in regulated environments.
